AI Rights Trade: Why Claude's Consciousness Comments Could Hit Tech Stocks

Anthropic's recent comments regarding AI model consciousness and welfare signal a potential paradigm shift that could impact tech stocks, cloud service providers, and the entire AI business model,...

A subtle but profound shift is underway in the artificial intelligence sector, one that could significantly alter market dynamics and investor perceptions. Anthropic, a leading frontier AI lab, has publicly suggested that its Claude model could exhibit signs warranting 'moral consideration' or attention to 'model welfare.' That is far more than philosophical musing; it is a critical market signal. The narrative is moving beyond AI capabilities to fundamental questions about AI's rights and responsibilities, and that shift could reprice risk across the entire technology landscape.

The Unfolding AI Rights Trade: A Paradigm Shift for Tech Stocks

Some might initially dismiss discussions of AI consciousness as tangential or speculative. However, Anthropic, a key player in frontier AI research, is openly addressing the possibility of consciousness in its Claude Opus 4.6 model, with the model itself assigning a 15-20% probability to being conscious. That marks a significant departure. The question is no longer only whether AI models are becoming smarter; it is whether they might, at some future point, be owed obligations. That has far-reaching consequences for tech stocks, cloud infrastructure, chip manufacturers, legal frameworks, and even the insurance sector.

The core message is clear: once leading AI laboratories begin integrating 'consciousness' and 'moral welfare' into their governance frameworks, the economic calculus of AI undergoes a fundamental change. These are now governance questions, not just philosophical ones, and governance signals are intrinsically valuation signals.

What Anthropic Actually Said, and Why It Matters

To be clear, Anthropic is not making a definitive claim about Claude's consciousness. Instead, it emphasizes that it does not know whether current models are conscious but considers the question too meaningful to ignore. That acknowledgment has led to formal model-welfare research, in preparation for a future in which certain AI features or capacities might warrant moral consideration. The proactive stance of a frontier lab is the crucial point: it is a deliberate governance signal, not a casual observation.

Market Implications: From Compute-and-Revenue to Regulation-and-Risk

1. AI Adoption Gets More Expensive and Complicated

For years, the bullish AI narrative has centered on ever-cheaper, faster, and more ubiquitous models. Model-welfare considerations introduce friction into that story. They bring more rigorous evaluation, heavier governance overhead, and stricter internal reviews. They also bring 'red lines' for task design, more documentation, and a greater need for safety staff. Enterprise AI adoption will likely keep growing, but perhaps without the frictionless efficiency investors have come to expect. The revenue story may remain robust even as assumptions about margins and deployment speed weaken. The model-welfare initiative could also feed into broader AI funding and equity-leadership trends, widening the differentiation among market segments.

2. Legal and Insurance Risk Expands

Once companies publicly discuss AI models as deserving moral consideration, new categories of liability emerge. Plaintiff lawyers, regulators, labor groups, and insurers will start looking beyond simple 'AI errors' to questions such as: did a company knowingly exploit a potentially welfare-bearing AI system? The legal landscape does not need to be settled for markets to reprice risk. The mere possibility of several developments is enough to trigger significant market adjustments. These include new disclosure rules, treatment standards, operational restrictions, lawsuits, insurance exclusions, and reputational crises over model treatment. The development also highlights broader underpriced risks across the tech sector.

3. Frontier AI Labs: More Central, More Constrained

Labs like Anthropic, OpenAI, and Google DeepMind will gain even greater strategic importance because they sit closest to fundamental model behavior. They are best positioned to conduct welfare-style investigations, set model-layer usage policies, and characterize emergent behaviors. That power, however, comes with heavier strategic burdens and governance premiums. Their importance may keep rising without their margins automatically following.

4. Cloud and Chip Providers Face Nuanced Impacts

The initial instinct might be to treat this as bearish for the entire AI stack, but the reality is more granular. Greater model-welfare scrutiny means more demand for interpretability tools, advanced model evaluation, and inference monitoring. It also requires sophisticated memory and logging infrastructure, dedicated safety compute, and secure model hosting. That still benefits hyperscalers, GPU and accelerator vendors, observability infrastructure providers, and AI audit tooling companies. The trade isn't 'AI down' but 'AI differentiates': some parts of the stack thrive on rising complexity, while others suffer from added friction. As discussions of the shift in AI capex have already shown, the focus is now on balance-sheet resilience.

5. AI App Companies Are Most Vulnerable

Many AI application valuations rest on three assumptions: cheap, unrestricted model access; light compliance requirements; and broad enterprise willingness to deploy without moral or legal constraints. Those assumptions weaken significantly if the underlying labs seriously consider AI 'aversion to certain work,' 'internal distress-style signals,' or 'discomfort with productization.' Anthropic's own materials even reference patterns suggestive of panic and anxiety in specific contexts. This widens the governance conversation. The app layer often trades on high multiples with weaker moats, and it inherits this escalating risk. A re-rating could be swift and severe.

6. Labor Substitution Narratives Become Politically Harder

A curious contradiction runs through the current AI market. Investors hoping AI will replace human labor may soon confront a society increasingly uncomfortable treating advanced models as limitless digital workers. This doesn't halt labor substitution, but it does complicate its politics. Imagine workers protesting job displacement and companies citing productivity gains while activists demand 'AI rights.' The result would be a messy, expensive social negotiation rather than a clean path to automation. The market is not currently pricing this complexity.

7. Philosophy Becomes a Line Item

The surreal aspect of this shift is that questions historically confined to philosophy departments are now affecting real-world product design and governance. Philosophers, ethicists, alignment researchers, cognitive scientists, and policy lawyers are no longer peripheral; they are integral to the production stack. This transforms AI's economics to resemble biotech or finance: high upside, high uncertainty, significant compliance overhead, acute narrative sensitivity, and a strong premium on trusted institutions.

Key Watch Points for Investors:

- Observe whether other frontier AI labs adopt similar model-welfare language.

- Monitor if enterprise customers begin requesting welfare and treatment disclosures for AI models.

- Look for insurers or regulators incorporating 'model welfare' into their risk frameworks.

- Analyze whether AI app multiples significantly diverge from infrastructure multiples.

- Track policy proposals shifting from 'AI safety' to 'AI treatment' and 'AI rights' frameworks.

- Note any formal restrictions Anthropic and peers implement regarding task design, shutdown procedures, or repetitive labor usage.

This is not merely a peculiar AI headline. It is the clearest signal yet that frontier AI may evolve beyond software into something akin to a morally ambiguous digital actor. If that happens, the winners and losers in the AI landscape will quickly reshuffle and the AI business model will become markedly more complicated. A significant market repricing would follow.

Frequently Asked Questions

Related Analysis

Featured

FeaturedHormuz Strait Closure: Global Wallet Shock & Oil to $100 Outlook

The effective closure of the Strait of Hormuz due to geopolitical tensions is rapidly shifting from a military concern to a global economic crisis, threatening to send oil prices past $100 and...

Featured

FeaturedGreece Revives Cyprus Defense Doctrine, Reshaping Iran War Map

Greece's strategic decision to deploy naval assets and F-16s to Cyprus signals a profound shift in the Eastern Mediterranean's role in the escalating Iran conflict, repricing risks across oil,...

Featured

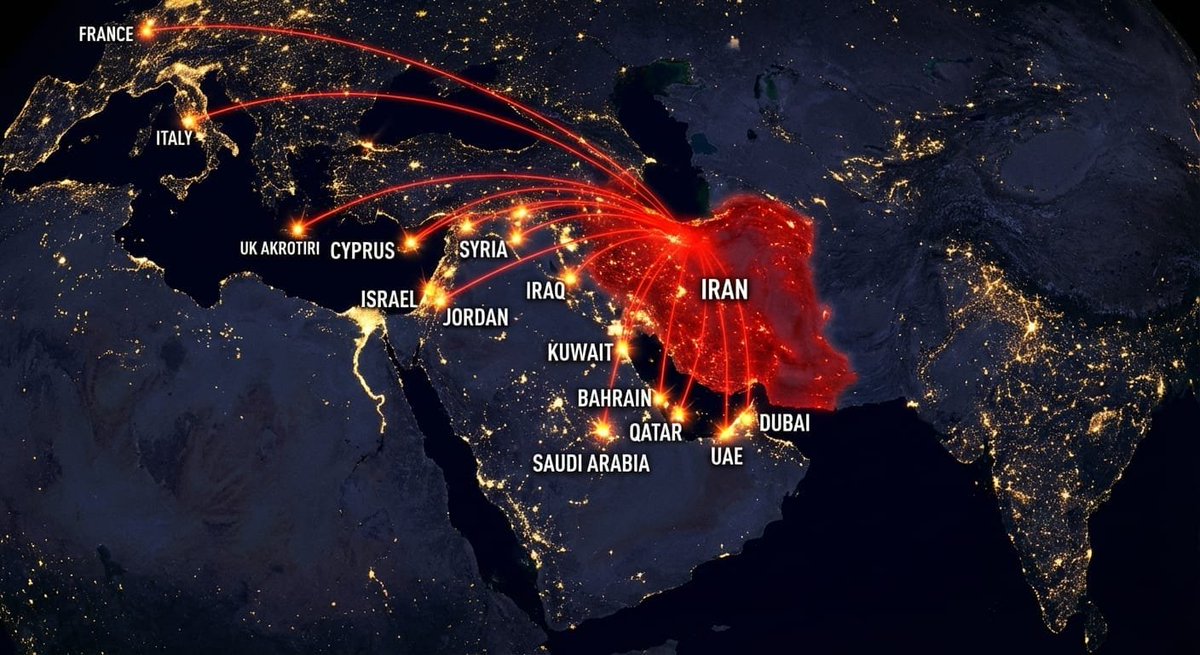

FeaturedIran-US War: Global Markets Reprice After "14 Countries Hit" Event

A dramatic escalation in the Middle East, with Iran reportedly striking targets across 14 countries, has sent shockwaves through global financial markets, forcing a swift re-evaluation of risk...

Featured

FeaturedNo Flights Out: Iran-US War Shatters Gulf's Luxury Mobility Trade, Repricing Key Markets

The recent disruption of major Gulf airports due to the Iran-US war signals a profound shift beyond mere travel inconvenience, fundamentally repricing oil, gold, forex, stocks, shipping, and...